Chapter Two: Foundational Skills

Usability evaluation

One of the key components of my curriculum is usability testing. This involves teaching students how to evaluate their work to see if it makes sense to other people, and to see if those people can use it effectively without encountering errors. When students first start to make things, they have a hard time seeing their creations from the perspective of another person. They assume that because they understand it, someone else will too. This is a form of “expert blindspot.” Usability testing sheds light on places where the expert blindspot (or simply a lack of experience) has led to poor design decisions.

I teach two main forms of usability testing.

Think Aloud Testing

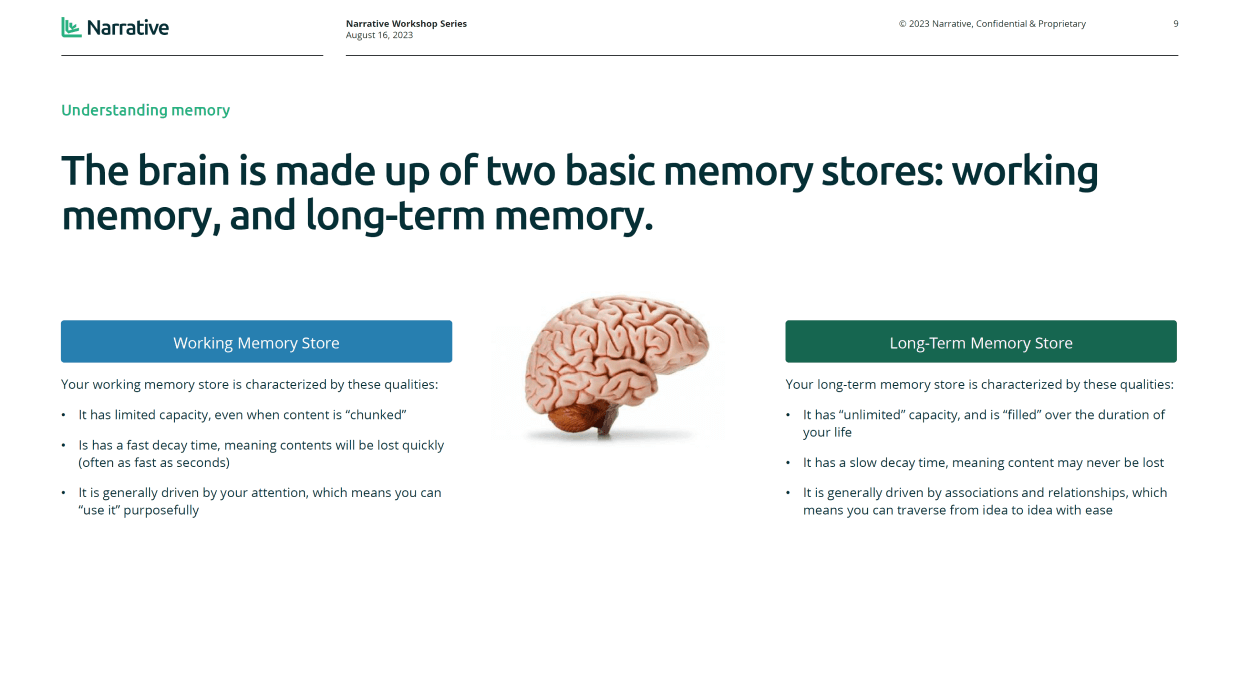

First, I teach a method called Think Aloud testing. The technique is simple: have a person use a product to try to achieve their goals, and have them talk out loud while they use it. But there’s more to the method than meets the eye. By talking out loud while accomplishing a task, a person is articulating the contents of their working memory. As long as the facilitator doesn’t prompt them with cues that lead to introspection, the talking gives a great view of how the participant thinks about the new design.

Cues that lead to introspection would look like this: “Why are you doing that?” or “What did you expect to happen there?” It’s tempting to ask these questions, but participants can’t answer them effectively. Introspection changes the contents of working memory, which alters how the participant actually goes about solving the problem.

So, during the evaluation, students simply prompt the user to “please keep talking” if they fall silent for more than a few seconds. This ensures a continual stream of comments, and gives students a very clear view of where their designs are hard to use.

This form of testing can be done with a prototype of any fidelity, even hand drawings on paper. When students are designing digital products, like websites or phone apps, they prepare each screen in a flow on a different piece of paper, and swap the screens out one at a time as the participant points at various elements on the paper. We practice in class. It takes a fair amount of organization prior to running an evaluation like this, because students need to prepare each screen and then arrange the screens in an order where they can easily and quickly reach them. By running a “test of the test” with other students, they can become more familiar with the test methodology itself and with their prototype materials.

I’ve noticed that some students are reluctant to show their design to a user if they don’t feel that it is perfect. I help them see the benefit of constant (and early) testing by reinforcing how quickly a change can be made during the early stages of design. When a design is still a marker sketch on a sheet of paper, changes can be made simply by crossing things out. This is a cheap and fast path to improvement, and when students realize how much time it saves them to test early in their design process, they embrace this form of testing.

Heuristic Evaluation

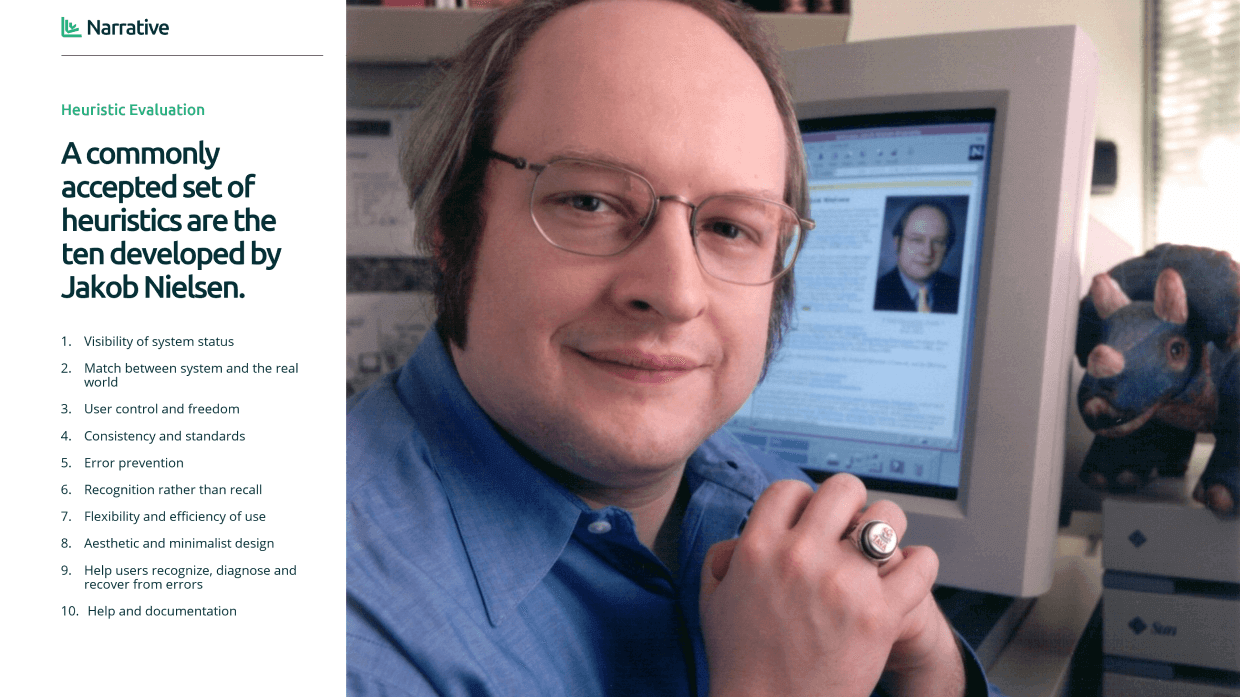

In addition to Think Aloud testing, students learn a second, supplementary form of testing called Heuristic Evaluation. While Think Aloud testing requires end users, Heuristic Evaluation is a form of expert review. Students compare their interface to a series of best practices, identify places where it doesn’t comply with these practices, and propose changes based on the misalignment.

Heuristic Evaluation relies on design principles that are well established in industry. Some of these focus on the same types of problems identified by Think Aloud testing, things like language misalignment or lack of help and documentation. The benefit of Heuristic Evaluation is that it doesn’t require users, which makes it a faster technique to learn and practice. Students simply inspect what they made and compare it to the heuristics. It takes less time, and identifies a number of usability issues.

However, the method alone fails to identify major cognitive misalignments in a design. It doesn’t typically identify large navigation problems that confuse users. It also doesn’t let students hear how users think about their interface, so students may be less likely to take the results seriously. There’s something really impactful about seeing an actual person struggle with a design. It resonates on an emotional level, and students are more likely to make changes to their work when they observe real people.

Cognitive Walkthrough

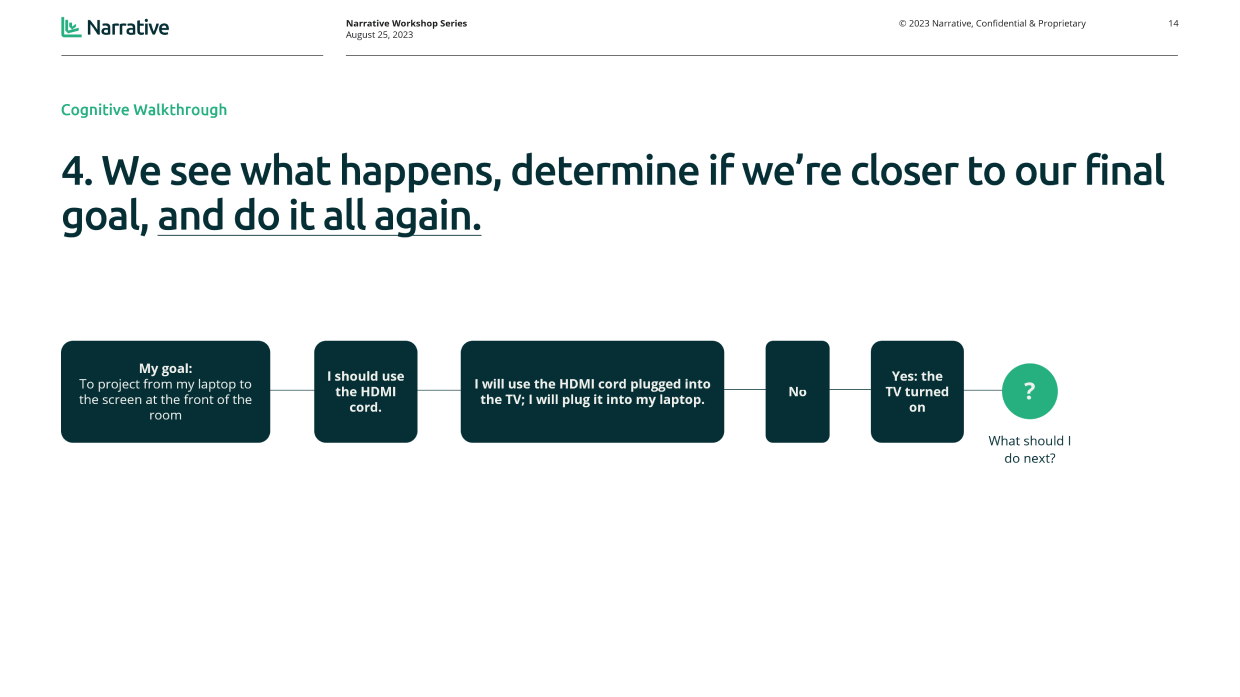

Sometimes, I teach a third method, called Cognitive Walkthrough. This method is particularly useful for evaluating the usability of a system for a novice. It helps designers identify where they've made interface design decisions that assume prior knowledge, and forces them to re-evaluate these decisions from the perspective of a new user.

Usability testing can be done throughout the curriculum. It can be performed any time a student has made something. As part of our user-centered curriculum, I encourage students to test early and often, and to include testing as a regular part of their process.